Researchers work to solve 5G network problems when it matters: right now

5G can enable smart cities, virtual realities, and self-driving cars, but will these applications be convenient and safe to use if the network connection is delayed? Commonwealth Cyber Initiative researchers from Virginia Tech developed a methodology that provides optimal solutions to network problems on the fly and in real time.

The fifth generation of mobile networks (5G) is bringing more applications, devices, and users into network operations. But spiking demand can stress local networks, creating bottlenecks that are narrowed further by safety-critical or other high-priority tasks that need to happen as soon as possible.

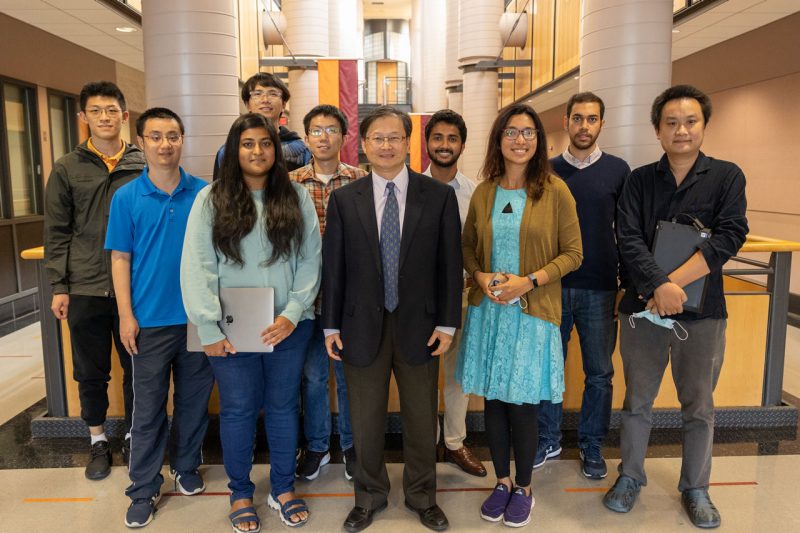

Computer engineers such as Virginia Tech’s Tom Hou have dedicated their careers to fine-tuning network parameters and components to get ever closer to peak performance — an endeavor further complicated by real-time demands.

“The holy grail of my research has always been timing,” said Hou, the Bradley Distinguished Professor of Electrical and Computer Engineering in the College of Engineering.

Timing constraints have forced computer engineers to modify their algorithms to stay within suboptimal network thresholds, which limits functionality and throttles performance.

This changed in 2018, when Hou’s research group hit upon a methodology that pulled real-time into range.

“This was a major breakthrough,” said Hou. “With support from the Commonwealth Cyber Initiative in Southwest Virginia, we elevated network optimization to a whole different level: solving problems in the field in real time.”

Ultra-high precision for ultra-low latency

In the context of 5G, timing is tied up with the concept of latency. Latency refers to time duration, or how long it takes to complete a certain task or step in a process. Minimizing latency is an attempt to reduce delay. When it comes to 5G, a delay of even a few milliseconds can make a difference to an industrial automation system or a power grid, for instance.

As a delay stretches, not only does user experience degrade, but the risk to devices, information, and safety also increases.

“Think about industrial automation or autonomous driving, which require information to be transported over different systems very quickly to ensure tight synchronization,” said Hou. “Reaction time on the road or in a warehouse is critical to preventing accidents, making latency of utmost importance.”

To deliver that kind of end-to-end latency, on the order of a millisecond, scheduling at the 5G base station has to be on the same order or even lower.

How it started

In 2018, Hou and his team were designing a system to meet the stringent timing requirements of new radio access technology for the 5G mobile network. To support applications with ultra-low latency, the minimum timing resolution for optimal 5G New Radio performance was set at 125 microseconds, about one-eighth of the 1-millisecond interval used in 4G LTE.

Up until then, no one had been able to deliver optimal scheduling in that interval.

The Virginia Tech team proposed a scheduling algorithm that incorporated a graphics processing unit (GPU) — a specialized circuit that uses parallel computing to accelerate workloads in high performance computing.

Parallel computing isn’t a new technology. A supercomputer processes computations in parallel with thousands of central processing units, but it’s expensive and cumbersome, and it isn’t available locally at a base station. By the time a base station outsources a task to the cloud and receives the results, it’s far too late to meet real-time scheduling needs.

Originally designed for graphics rendering, a GPU isn’t in the same league as a supercomputer in terms of processing capability. It wasn’t designed for scientific computation or solving complex optimization problems, but when coupled with Hou’s new scheme, it doesn’t have to be.

Hou and his team developed a multistep methodology that breaks a big problem down into a set of smaller subproblems and then zeros in on the subproblems that are likely to yield the most promising results. This manageable set of small problems can then be solved in parallel on a GPU.
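The decompose, select, and solve-in-parallel pattern can be illustrated with a toy example. The sketch below is not the team’s algorithm; it is a minimal illustration under stated assumptions. The problem (assigning radio resource blocks to users under a proportional-fair utility), the function names, and the `prefix_len` and `keep` parameters are all hypothetical, and a Python thread pool stands in for GPU threads only to show that the selected subproblems are independent of one another.

```python
import itertools
import math
from concurrent.futures import ThreadPoolExecutor


def utility(assignment, rates):
    """Proportional-fair utility: sum over users of log(1 + total rate)."""
    totals = [0.0] * len(rates)
    for block, user in enumerate(assignment):
        totals[user] += rates[user][block]
    return sum(math.log1p(t) for t in totals)


def complete_greedily(prefix, rates, num_blocks):
    """Solve one subproblem: keep the fixed prefix, fill the rest greedily."""
    assignment = list(prefix)
    for _ in range(len(prefix), num_blocks):
        # Give the next block to whichever user raises the utility most.
        best_user = max(
            range(len(rates)),
            key=lambda u: utility(assignment + [u], rates),
        )
        assignment.append(best_user)
    return utility(assignment, rates), assignment


def schedule(rates, num_blocks, prefix_len=2, keep=4):
    """Toy decompose / select / solve-in-parallel scheduler (illustrative only)."""
    num_users = len(rates)
    # Step 1: decompose. Each way of fixing the first few blocks is a subproblem.
    prefixes = list(itertools.product(range(num_users), repeat=prefix_len))
    # Step 2: select. Rank subproblems with a cheap score and keep the best few.
    prefixes.sort(key=lambda p: utility(p, rates), reverse=True)
    promising = prefixes[:keep]
    # Step 3: solve the kept subproblems independently (threads stand in for a GPU).
    with ThreadPoolExecutor() as pool:
        results = list(
            pool.map(lambda p: complete_greedily(p, rates, num_blocks), promising)
        )
    # Step 4: return the best (utility, assignment) pair found.
    return max(results, key=lambda r: r[0])


if __name__ == "__main__":
    # rates[user][block]: achievable rate if that user gets that block (made-up data).
    example_rates = [[5.0, 1.0, 1.0, 4.0], [1.0, 5.0, 4.0, 1.0]]
    value, assignment = schedule(example_rates, num_blocks=4)
    print(value, assignment)
```

Because only a few subproblems are kept and each is solved with a heuristic, the result is a good solution rather than a guaranteed optimum, which mirrors the “near-optimal” trade-off described in the article.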

“With this technique, even a low-end GPU can find near-optimal solutions within the sub-millisecond time window,” said Hou.

The team’s innovation rocked the field of wireless network optimization.

“Probably the most important feature of 5G is the ability to communicate with low latency, and Professor Hou’s work makes this feasible,” said Jeff Reed, the Commonwealth Cyber Initiative's chief technology officer and the Willis G. Worcester Professor of electrical and computer engineering at Virginia Tech.

GPU manufacturer Nvidia showcased Hou’s work, which was carried out in collaboration with fellow Commonwealth Cyber Initiative researcher Wenjing Lou in computer science. The invention, as applied to 5G schedulers, was awarded a U.S. patent. But this was just the beginning.

“We thought — wait a minute, there’s more than just scheduling for 5G problem,” said Hou. “We identified the key steps, theorized the technique, and implemented it to solve other wireless networking and communications problems with similar mathematical structure.”

Scaling up to secure autonomous vehicles

Armed with a process that brings real-time solutions into reach, Hou’s research group was ready to apply it to complex problems in different domains. With continued support from the Commonwealth Cyber Initiative in Southwest Virginia, the team is tackling radar interference in autonomous vehicles.

In addition to camera and lidar, autonomous vehicles monitor road conditions with radar because it’s not finicky about weather or lighting conditions. Day, night, rain, or snow — radar is robust.

It is, however, susceptible to interference. A radar bounces a signal off nearby objects and then measures the reflected signal to determine what’s on the road and around the vehicle. When there are too many radars on the road, signals bounce around willy-nilly, compromising a radar’s normal capability.

“Such radar-to-radar interference offers a relatively easy way to unleash a cyberattack on an autonomous vehicle and compromise safety,” said Hou.

Hou is applying the methodology to sort through which signals should matter to an autonomous vehicle, mitigating high levels of interference in real time, in-vehicle, and with an affordable GPU.

The application of the scheduling algorithm to radar interference mitigation will be published in the IEEE Radar Conference proceedings in early spring, and a patent has been filed with Virginia Tech Intellectual Properties. The Commonwealth Cyber Initiative provides funds for the translation of research into practice through programs such as “Innovation: Ideation to Commercialization” and through support for patent costs.

Edge computing and elsewhere

Hou and his team are also applying their technique to task offloading for edge computing, which involves determining which tasks should be processed on a local device and which should be offloaded to the 5G base station.
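To make the offloading decision concrete, here is a minimal sketch of the simplest possible rule: offload a task only when uploading it and computing it at the edge is estimated to finish sooner than computing it locally. This is an illustrative assumption, not the team’s method, which treats offloading as a real-time optimization problem. The `Task` fields, the function name, and all the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    cycles: float      # CPU cycles the task needs (hypothetical estimate)
    input_bits: float  # data that must be uploaded if the task is offloaded


def should_offload(task, local_hz, edge_hz, uplink_bps):
    """Return True if offloading is estimated to finish sooner than local compute."""
    local_seconds = task.cycles / local_hz
    # Offloading pays an upload cost before the (faster) edge server can start.
    offload_seconds = task.input_bits / uplink_bps + task.cycles / edge_hz
    return offload_seconds < local_seconds


if __name__ == "__main__":
    tasks = [
        Task("object-detection", cycles=2e9, input_bits=1e6),  # heavy compute, small input
        Task("signal-filter", cycles=1e6, input_bits=8e7),     # light compute, large input
    ]
    for task in tasks:
        decision = should_offload(task, local_hz=1e9, edge_hz=1e10, uplink_bps=1e8)
        print(task.name, "-> offload" if decision else "-> run locally")
```

A per-task rule like this ignores contention: when many devices offload at once, the uplink and the edge server are shared, so the decisions become coupled and have to be optimized jointly.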

“Our new real-time optimization methodology has many, many applications. It’s opened up a new life, certainly for me, but also for other researchers doing network optimizations,” said Hou. “We keep seeing new places where this methodology can be applied, and in many cases, finding groundbreaking solutions along the way.”